Project Overview

This case study documents the design and rollout of an enterprise-grade Conversational AI service built on KORE.ai, supporting regulated environments such as banking and large-scale enterprise operations. From a product design leadership perspective, the challenge was not to “design a chatbot,” but to define a new enterprise interaction model—one where conversation becomes a primary interface for executing complex, high-risk tasks. The success of this initiative depended on aligning user experience, AI capability, compliance requirements, and organizational governance into a single, coherent service. Design decisions were evaluated not only on usability, but on risk, trust, scalability, and long-term operational viability.

PROBLEM

As VP of UX Design, I led efforts to resolve experience failures caused by legacy, fragmented systems built around internal processes instead of user workflows. This forced agents and operations teams to navigate complex, disconnected tasks, increasing inefficiency and risk.

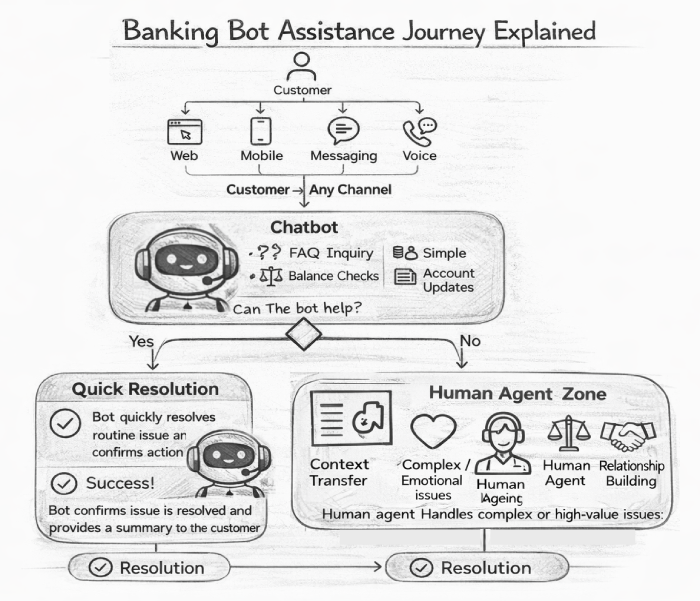

The primary UX challenge was cognitive overload. Users depended on memory and personal expertise to compensate for inconsistent system behavior, leading to errors and reduced reliability. Siloed conversational AI implementations further eroded trust by behaving inconsistently across domains. From a governance standpoint, missing confirmations, explainability, and audit trails created regulatory risk, positioning Conversational AI as an unreliable support tool rather than a trusted enterprise capability.

Experience & Product Goals

- Reduce cognitive load by guiding users through complex tasks with clear, step-by-step conversational flows.

- Ensure consistent behavior across domains so users can build reliable mental models and trust system responses.

- Embed trust and transparency through explicit intent validation, confirmations, and explainable actions.

- Minimize regulatory risk by making auditability and traceability native to the experience.

- Scale as an enterprise capability using shared UX standards that balance team autonomy with governance.

MY ROLE

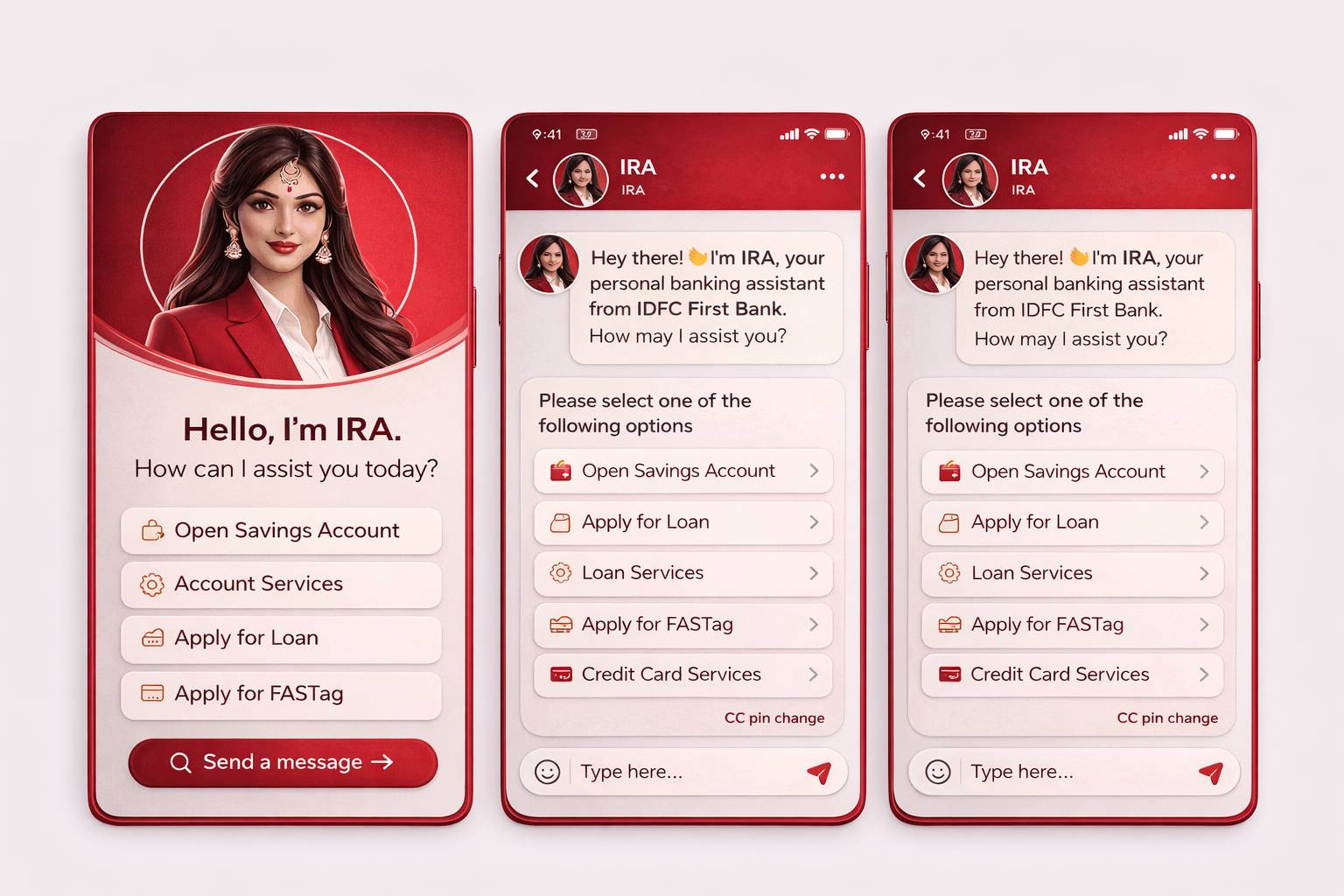

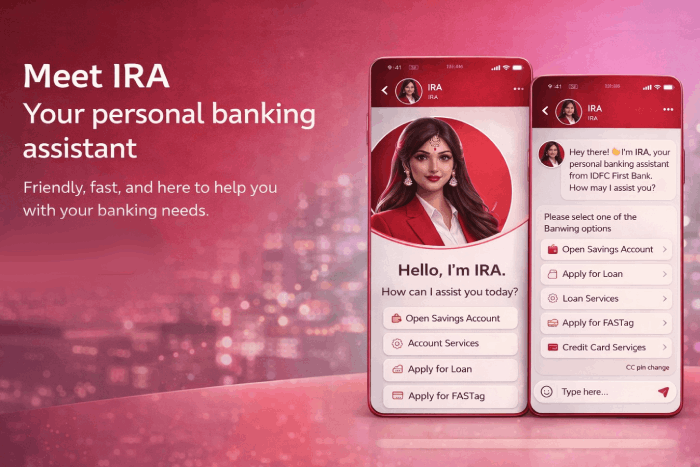

When the organization scaled IRA-focused Conversational AI, I took on end-to-end accountability as VP of UX for both the experience and the operating model. The mandate was to deliver a trusted conversational capability for high-stakes financial use cases while meeting strict regulatory and platform constraints within the Phenom ecosystem.

I defined the conversational experience vision and governance framework, ensuring decisions were consistently reviewed and approved across internal teams and partners. As the final authority on experience decisions, I aligned business goals, IRA compliance, AI platform capabilities, and user needs—enabling Conversational AI to scale as a trusted, auditable enterprise service rather than an experimental feature.

Responsibilities

- Defined the Conversational UX strategy and experience direction for the bot across candidate and employee-facing journeys

- Governed internal teams and vendors to ensure alignment with conversational UX standards, accessibility guidelines, and regulatory compliance

- Established intent, conversation, and decision frameworks to reduce ambiguity, inconsistency, and rework across bot use cases

- Enabled business, product, and engineering teams with clear Conversational UX processes, ownership models, and design guardrails

RESEARCH & INSIGHT

Research shaped every aspect of the IRA Conversational AI experience. In high-stakes financial contexts, users prioritized trust, control, and clarity over speed, leading us to design structured, step-by-step conversations with deliberate confirmations for critical actions.

To support predictability, we standardized intents, responses, confirmations, and fallback patterns across IRA workflows, enabling consistent behavior and reliable mental models. Transparency was built in through clear system signaling and plain-language explanations of actions and outcomes.

Finally, recognizing a low tolerance for failure, we designed error recovery and human handoff as first-class experiences, reinforcing trust rather than treating them as exceptions.

PERSONA

As VP of UX, I defined personas and user journeys to reflect the real operational roles interacting with the conversational service. Personas were grounded in responsibility, risk exposure, and decision authority rather than demographics, ensuring design decisions aligned with how agents, operations teams, supervisors, and compliance stakeholders actually worked.

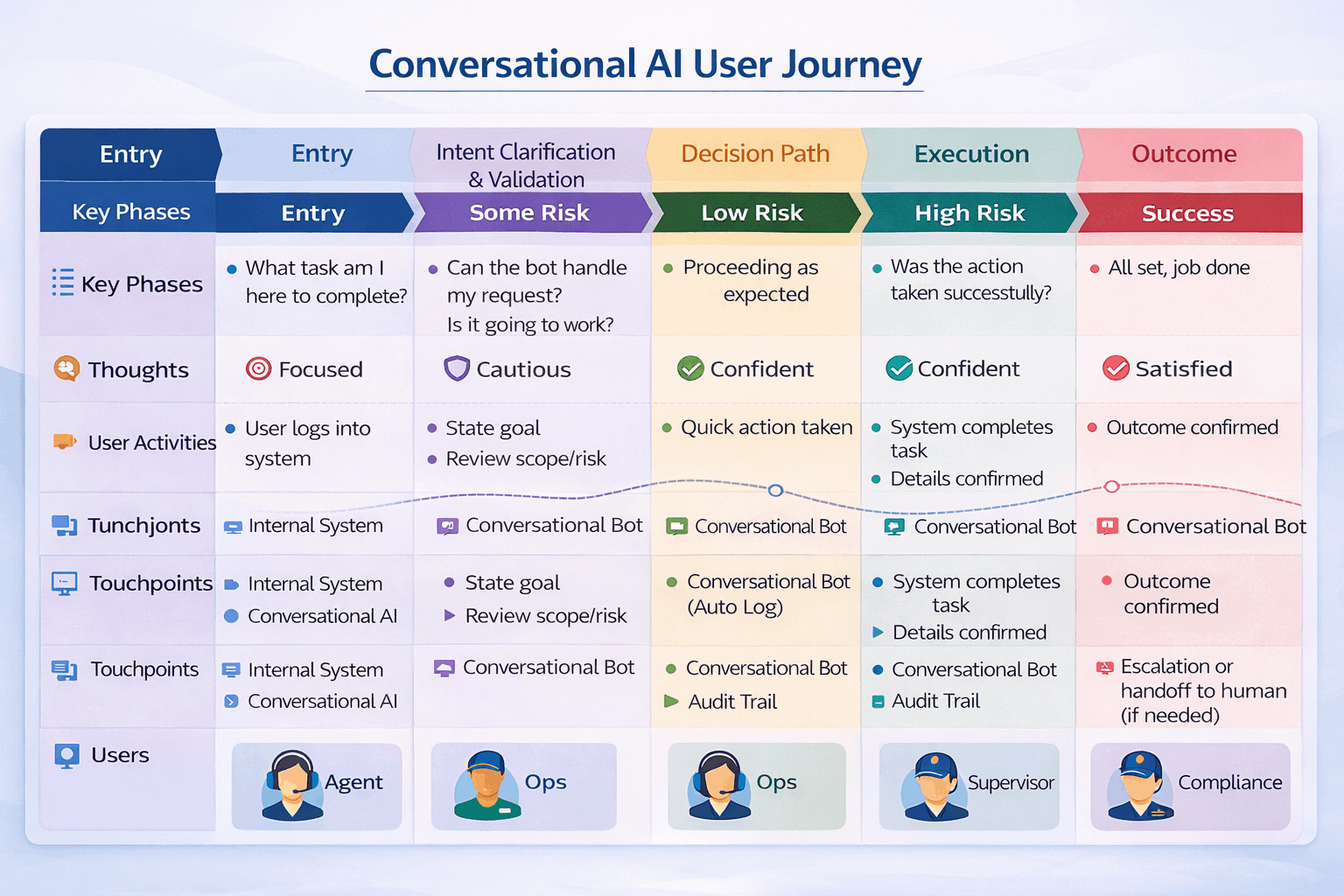

User Journey Map

Candidates discover and evaluate roles based on clarity, relevance, and trust in the employer brand. They assess growth signals, eligibility, and role fit, then complete a frictionless application aligned to their context and device. Post-application communication and visibility play a critical role in shaping confidence and long-term employer perception.

AFFINITY MAPPING & Time-Constrained

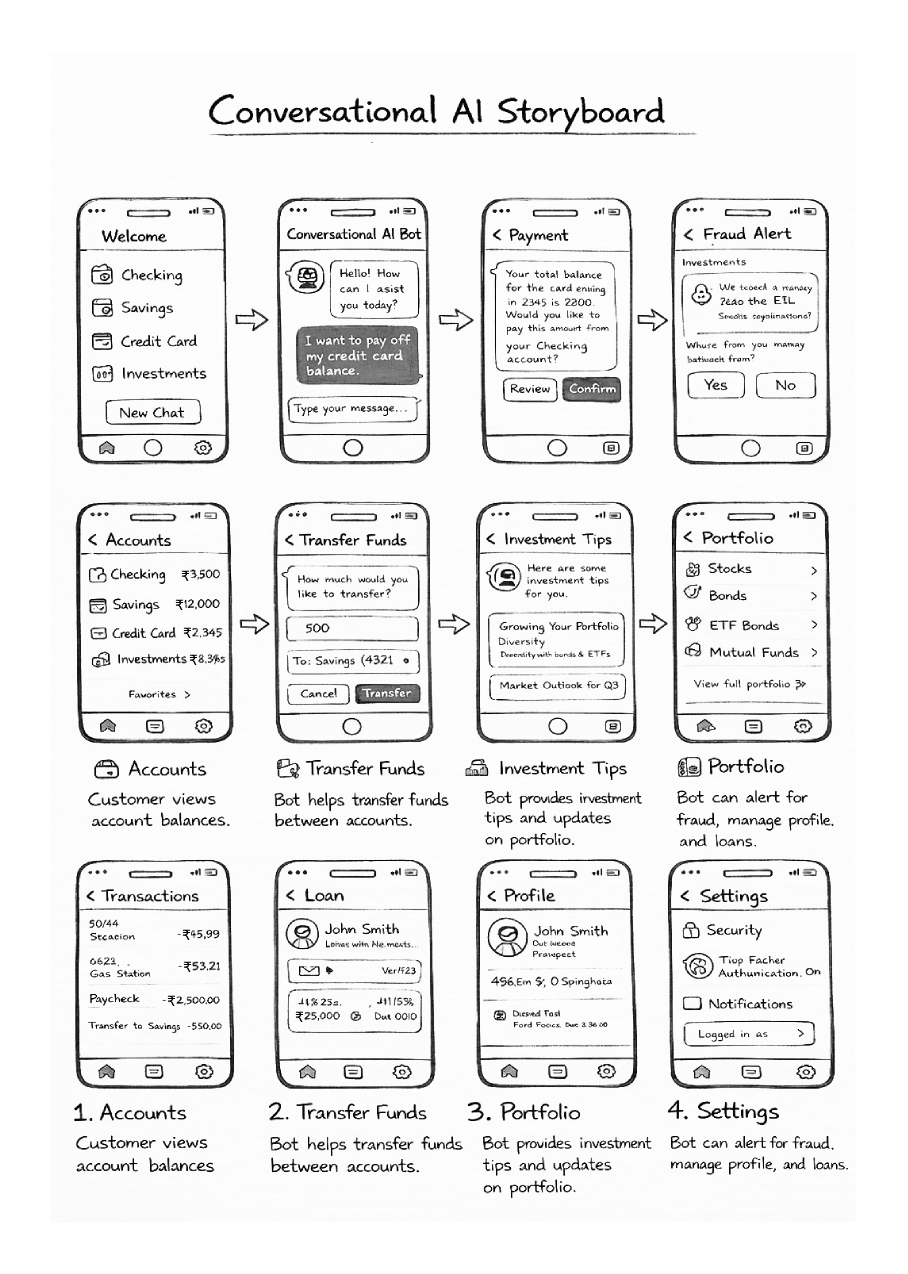

STORYBOARDING

Storyboards were used to isolate and validate the highest-impact moments in the candidate journey, aligning stakeholders early and reducing rework during vendor implementation.

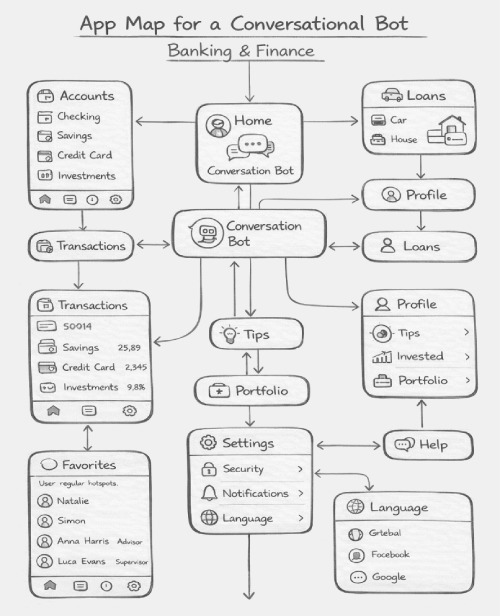

APP MAP

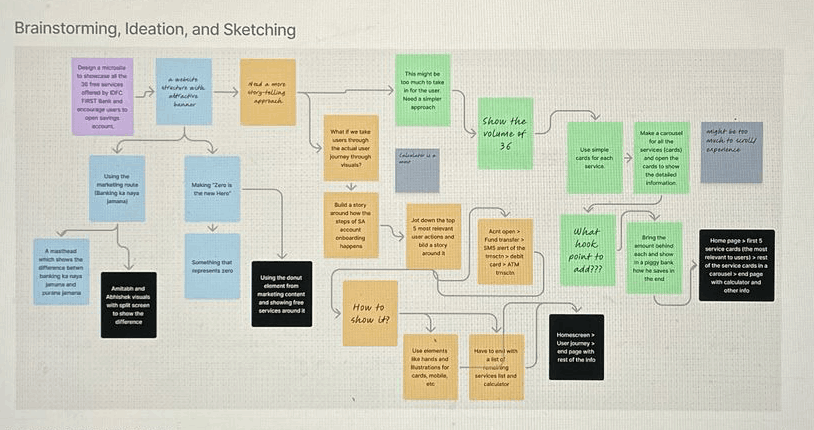

The application map was developed through guided brainstorming sessions, where I helped teams evaluate multiple structural approaches and converge on an information architecture that balanced simplicity, scalability, and usability across candidate journeys.

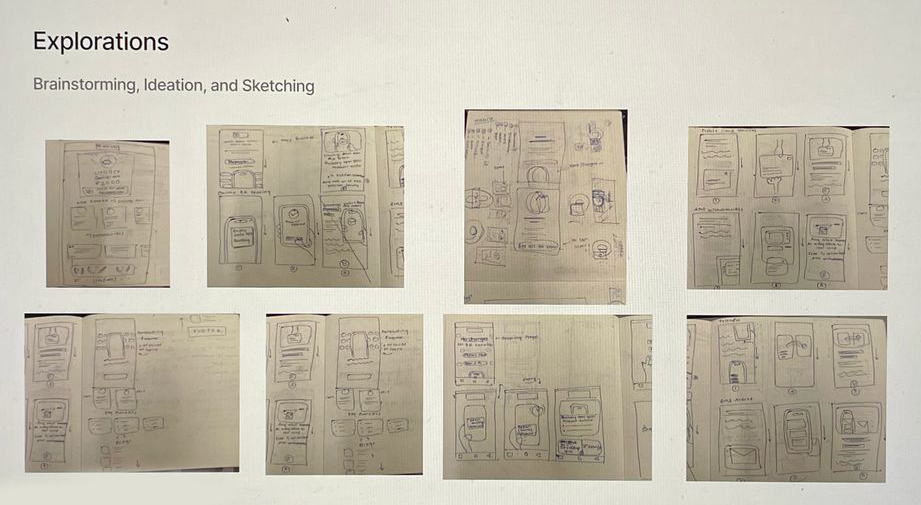

PAPER WIREFRAME

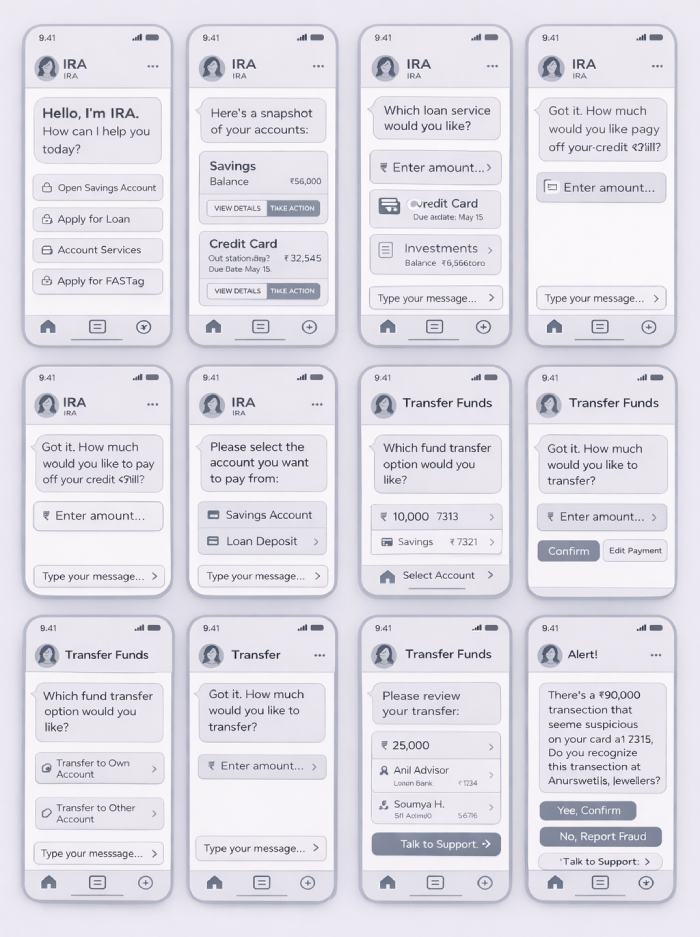

DIGITAL WIREFRAME

Conversational UX Framework

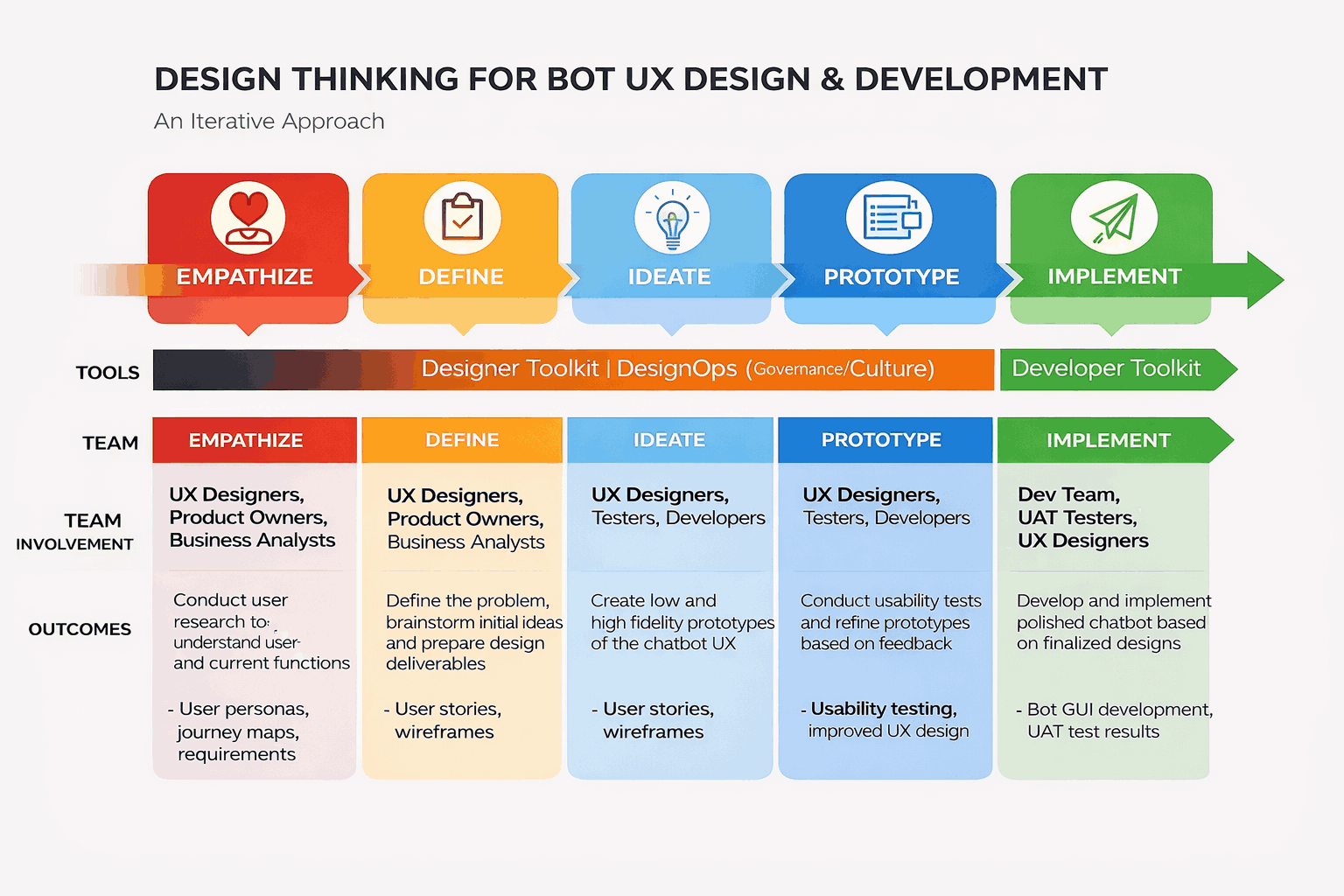

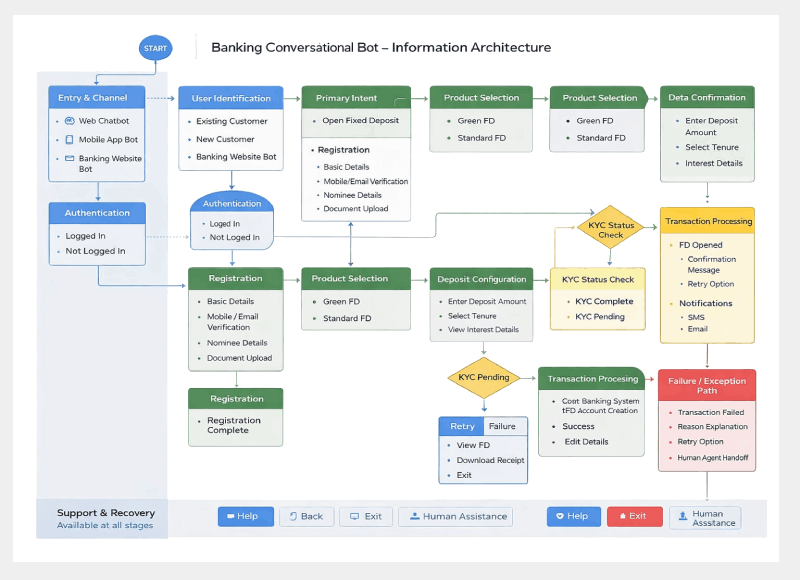

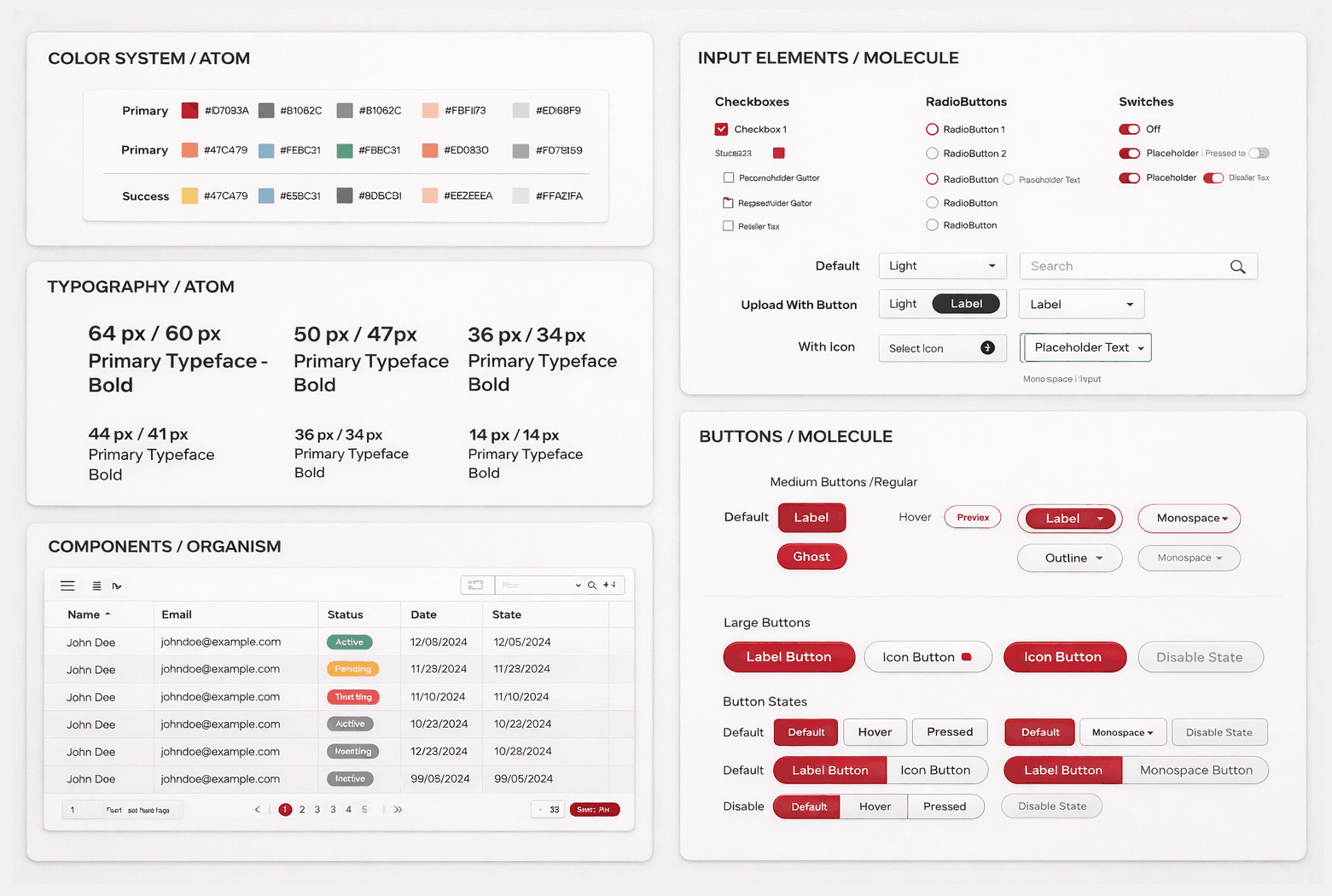

As UX lead, I established a Conversational UX Framework to standardize how conversational services are designed, governed, and scaled across banking and enterprise use cases on KORE.ai. The framework treated conversation design as shared enterprise infrastructure rather than isolated chatbot solutions, enabling consistency, reuse, and long-term scalability.

At its core, the framework defined common conversation patterns, including dialog structure, turn management, confirmations, error recovery, and escalation. A unified, function-aligned intent taxonomy ensured predictable behavior, enabled analytics, and reduced duplication across teams. For regulated workflows, the framework enforced progressive disclosure and explicit validation, balancing usability with compliance and risk management. Explainability and audit readiness were embedded through transparent system responses and traceable conversation logs. Standardized fallback and human-handoff patterns ensured seamless continuity when automation thresholds were reached.

This approach allowed multiple teams to build independently while delivering coherent, compliant, and trustworthy conversational experiences across the organization.
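To make the framework's core patterns concrete, the following is a minimal Python sketch of how a confirmation gate, a fallback, a human handoff, and an audit trail might fit together in one turn handler. It is illustrative only and is not KORE.ai code: the intent names, the `MAX_MISSES` threshold, and every class and function shown here are hypothetical, and exact-phrase matching stands in for real intent recognition.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class Intent:
    name: str   # function-aligned name, e.g. "payments.transfer" (hypothetical)
    risk: Risk


@dataclass
class Session:
    pending: Optional[Intent] = None   # high-risk intent awaiting confirmation
    misses: int = 0                    # consecutive unrecognized turns
    audit_log: List[Tuple[str, str]] = field(default_factory=list)


MAX_MISSES = 2  # assumed automation threshold before handing off to a human

# Hypothetical shared taxonomy; a real system would use an NLU model, not exact phrases.
TAXONOMY = {
    "check balance": Intent("accounts.balance", Risk.LOW),
    "transfer funds": Intent("payments.transfer", Risk.HIGH),
}


def _record(session: Session, intent_name: str, outcome: str) -> str:
    """Append every decision to the audit log so the conversation is traceable."""
    session.audit_log.append((intent_name, outcome))
    return outcome


def handle_turn(session: Session, utterance: str) -> str:
    """Return the outcome of one user turn: executed, confirm, cancelled, fallback, or handoff."""
    text = utterance.strip().lower()

    # A pending high-risk action runs only on an explicit "yes".
    if session.pending is not None:
        intent = session.pending
        session.pending = None
        outcome = "executed" if text == "yes" else "cancelled"
        return _record(session, intent.name, outcome)

    intent = TAXONOMY.get(text)
    if intent is None:
        # Standardized fallback first, then human handoff once the threshold is reached.
        session.misses += 1
        outcome = "handoff" if session.misses >= MAX_MISSES else "fallback"
        return _record(session, "unknown", outcome)

    session.misses = 0
    if intent.risk is Risk.HIGH:
        session.pending = intent
        return _record(session, intent.name, "confirm")
    return _record(session, intent.name, "executed")
```

In this sketch, a high-risk request such as "transfer funds" first returns `confirm` and executes only after the user replies "yes", while two consecutive unrecognized turns move from `fallback` to `handoff`. Every outcome is appended to `audit_log`, which mirrors the idea of traceability being native to the experience rather than added afterwards.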

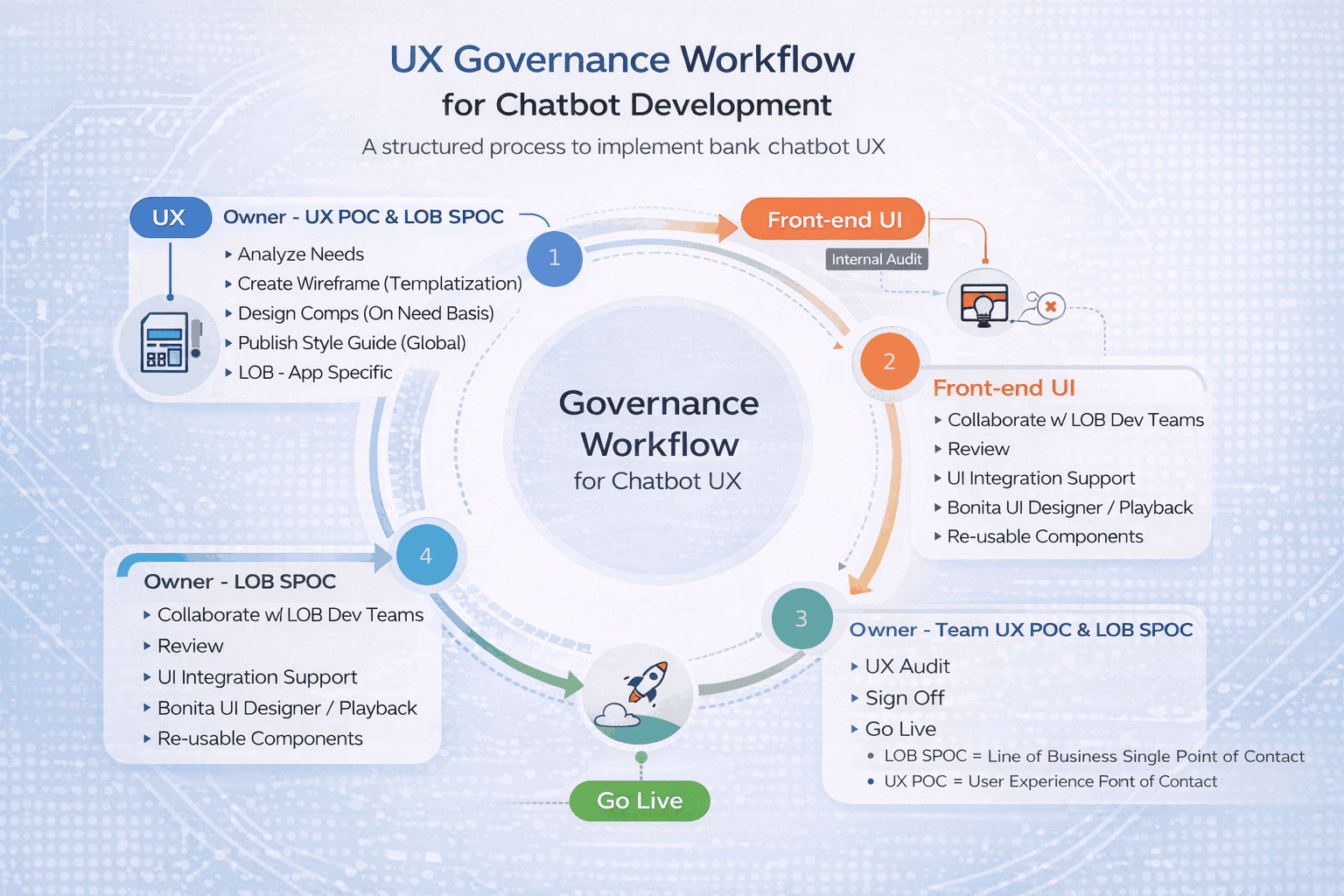

UX Governance Model

A centralized Conversational UX Governance Model was established to ensure quality, consistency, and compliance across conversational services without slowing delivery. The model balanced enterprise accountability with team autonomy by defining clear UX and compliance guardrails while allowing domain teams to build independently.

Core conversation standards and risk controls were centrally maintained, while implementation remained flexible at the domain level. Governance was lightweight and embedded into delivery workflows, focusing on systemic risks such as intent clarity, confirmations, and escalation paths rather than surface-level design. Continuous improvement was driven through analytics and shared learnings, enabling the organization to scale conversational services while maintaining trust and consistency.
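One way such lightweight, embedded governance could work is as an automated review step in the delivery pipeline. The sketch below is an assumption for illustration, not the tooling used on this program: `FlowSpec`, `review`, and the "domain.action" naming convention are all hypothetical. It checks only the systemic risks named above: intent clarity, confirmations, and escalation paths.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical naming convention for the shared taxonomy: "domain.action"
INTENT_NAME = re.compile(r"^[a-z]+(\.[a-z_]+)+$")


@dataclass(frozen=True)
class FlowSpec:
    """Minimal description of a conversational flow submitted by a domain team."""
    intent: str
    high_risk: bool
    has_confirmation: bool
    has_escalation_path: bool


def review(flow: FlowSpec) -> List[str]:
    """Return governance findings for one flow; an empty list means it passes."""
    findings = []
    if not INTENT_NAME.match(flow.intent):
        findings.append("intent name does not follow the shared taxonomy")
    if flow.high_risk and not flow.has_confirmation:
        findings.append("high-risk flow lacks an explicit confirmation step")
    if not flow.has_escalation_path:
        findings.append("flow has no escalation path to a human")
    return findings
```

A check like this leaves visual and copy decisions to the domain team and flags only the guardrails that are centrally owned, which is the balance between autonomy and accountability the model aimed for.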

Final Demo